Building SVMs from Scratch in C++: Glacier’s PEGASOS Implementation

Glacier.ML now includes working implementations of:

- SVM Classifier

- SVM Regressor

Both are implemented in C++ using:

- Eigen for linear algebra

- OpenMP for multithreading

- OpenBLAS for optimized BLAS routines

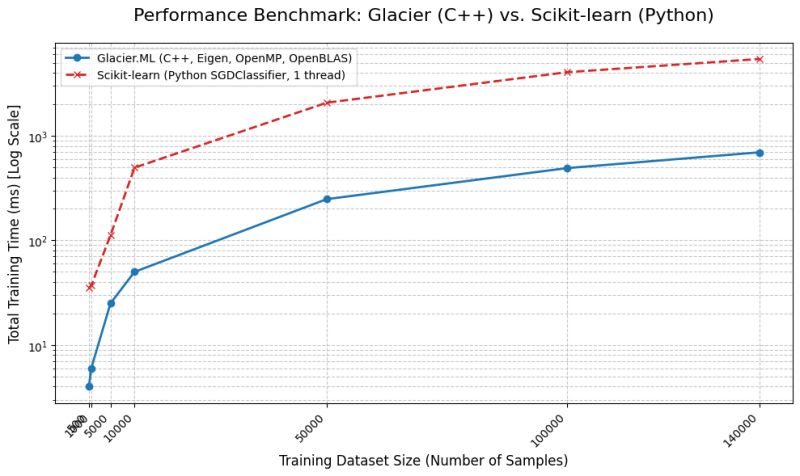

In local benchmarks, the classifier variant trained 4–10× faster than scikit-learn; the caveats on that comparison are listed under Performance Observations. This post documents the algorithmic design, performance characteristics, and implementation philosophy behind these models.

Algorithmic Clarification: Not Classical Kernel SVM

Glacier’s SVM implementations are not dual-form, kernelized SVMs.

They are based on:

PEGASOS

(Primal Estimated sub-GrAdient SOlver for SVM)

PEGASOS directly optimizes the primal objective using stochastic subgradient descent.

Classification Objective

\[\min_{w} \frac{\lambda}{2} \|w\|^2 + \frac{1}{m} \sum_{i=1}^{m} \max\left(0,\; 1 - y_i\, w^{T} x_i\right)\]

Regression Objective (ε-insensitive loss)

\[\min_{w} \frac{\lambda}{2} \|w\|^2 + \frac{1}{m} \sum_{i=1}^{m} \max\left(0,\; |y_i - w^{T} x_i| - \epsilon\right)\]

This places Glacier’s SVM variants closer in spirit to:

- SGDClassifier

- SGDRegressor

rather than SVC with kernel tricks.

The models are strictly linear. No feature mapping or kernel transformation is performed.
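To make the two objectives above concrete, here is a minimal sketch of a single-sample PEGASOS-style subgradient step for both losses. It is not Glacier's actual code: it uses plain `std::vector` instead of Eigen so that it stays dependency-free, it omits any bias term, projection step, and threading, and the names `hinge_coeff`, `eps_coeff`, `pegasos_step`, and `train_hinge` are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <random>
#include <vector>

using Vec = std::vector<double>;

// Plain dot product; both vectors must have the same length.
double dot(const Vec& a, const Vec& b) {
    assert(a.size() == b.size());
    double s = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i) s += a[i] * b[i];
    return s;
}

// Hinge loss max(0, 1 - y * w.x): the subgradient w.r.t. w is c * x,
// with c = -y when the margin is violated and 0 otherwise.
double hinge_coeff(const Vec& w, const Vec& x, double y) {
    return (y * dot(w, x) < 1.0) ? -y : 0.0;
}

// Epsilon-insensitive loss max(0, |y - w.x| - eps): the subgradient is c * x,
// with c = -1 above the tube, +1 below it, and 0 inside it.
double eps_coeff(const Vec& w, const Vec& x, double y, double eps) {
    const double r = y - dot(w, x);
    if (r > eps) return -1.0;
    if (r < -eps) return 1.0;
    return 0.0;
}

// One stochastic subgradient step with the rate eta_t = 1 / (lambda * t).
// c is the coefficient from hinge_coeff or eps_coeff; t starts at 1.
void pegasos_step(Vec& w, const Vec& x, double c, double lambda, std::size_t t) {
    assert(t >= 1 && lambda > 0.0 && w.size() == x.size());
    const double eta = 1.0 / (lambda * static_cast<double>(t));
    const double shrink = 1.0 - eta * lambda;  // contribution of the L2 regularizer
    for (std::size_t i = 0; i < w.size(); ++i) {
        w[i] = shrink * w[i] - eta * c * x[i];
    }
}

// Linear classifier training loop: one uniformly sampled example per step.
Vec train_hinge(const std::vector<Vec>& X, const Vec& y, double lambda,
                std::size_t steps, unsigned seed) {
    assert(!X.empty() && X.size() == y.size());
    Vec w(X[0].size(), 0.0);
    std::mt19937 rng(seed);
    std::uniform_int_distribution<std::size_t> pick(0, X.size() - 1);
    for (std::size_t t = 1; t <= steps; ++t) {
        const std::size_t i = pick(rng);
        pegasos_step(w, X[i], hinge_coeff(w, X[i], y[i]), lambda, t);
    }
    return w;
}
```

A regressor loop would look the same with `eps_coeff` in place of `hinge_coeff`. Prediction is the sign of `dot(w, x)` for classification and `dot(w, x)` itself for regression, which is all a strictly linear model needs.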

Implementation Stack

1. Eigen + OpenBLAS

- Eigen provides matrix abstractions and expression templates.

- OpenBLAS accelerates low-level BLAS operations.

Key goals:

- Cache-friendly layouts

- Minimal heap allocations

- Efficient vectorized dot products

- Reduced abstraction overhead

The implementation avoids unnecessary temporaries and keeps updates memory-local wherever possible.
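As an illustration of what "no unnecessary temporaries" can mean in practice, the sketch below fuses a scale and an add into one pass over contiguous memory, so no intermediate vector is allocated. It is an assumption about the general technique, not a copy of Glacier's code, and `scale_add_inplace` is a hypothetical name. With Eigen, expression templates typically give a similar effect for element-wise expressions.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Computes w <- a * w + b * x in a single pass.
// Writing the result straight into w avoids allocating a temporary
// for a * w or b * x, and keeps the access pattern sequential.
inline void scale_add_inplace(std::vector<double>& w, double a,
                              const std::vector<double>& x, double b) {
    assert(w.size() == x.size());
    double* wp = w.data();
    const double* xp = x.data();
    const std::size_t n = w.size();
    for (std::size_t i = 0; i < n; ++i) {
        wp[i] = a * wp[i] + b * xp[i];
    }
}
```

A simple loop like this over contiguous `double` data is also one that compilers can usually auto-vectorize at higher optimization levels.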

2. OpenMP Threading Strategy

Glacier uses half of the available hardware threads by default.

This is deliberate.

Instead of saturating the CPU:

- Thermal throttling is reduced.

- Sustained performance is more stable.

- System responsiveness is preserved.

In contrast, scikit-learn often utilizes all available threads depending on the BLAS backend.

Thread policy alone can significantly affect observed training time.
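A half-of-hardware-threads default could be expressed roughly as follows. This is a sketch under assumptions, not Glacier's actual policy code: the helper names `half_thread_count` and `apply_half_thread_policy` are hypothetical, and the OpenMP call is guarded so the snippet still compiles when OpenMP is not enabled.

```cpp
#include <algorithm>
#include <thread>
#ifdef _OPENMP
#include <omp.h>
#endif

// Half of the reported hardware threads, but never fewer than one.
// std::thread::hardware_concurrency() may return 0 when the count is unknown.
inline int half_thread_count(unsigned hardware_threads) {
    return static_cast<int>(std::max(1u, hardware_threads / 2u));
}

// Chooses the thread count and, when built with OpenMP, applies it to
// subsequent parallel regions. Returns the chosen count either way.
inline int apply_half_thread_policy() {
    const int n = half_thread_count(std::thread::hardware_concurrency());
#ifdef _OPENMP
    omp_set_num_threads(n);
#endif
    return n;
}
```

Calling `apply_half_thread_policy()` once before training would cap OpenMP parallel regions at that count. A BLAS backend may manage its own thread pool separately, so its setting would need to be aligned as well for the policy to hold end to end.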

Performance Observations

Speed

The classifier model showed 4–10× faster training compared to scikit-learn in local tests.

Important caveats:

- The implementations are not identical.

- Threading strategies differ.

- Memory allocation patterns differ.

- Learning rate scheduling may differ.

This is not a strict apples-to-apples benchmark.

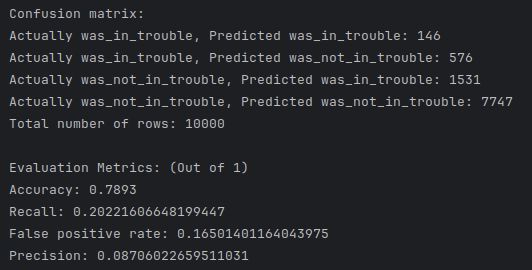

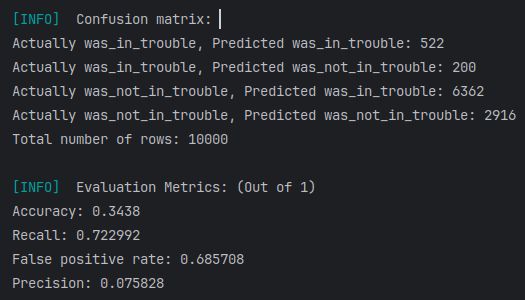

Underfitting on “Give Me Some Credit”

Both Glacier and scikit-learn underfit on the “Give Me Some Credit” dataset.

Evidence:

- Weak recall on minority classes

- Confusion matrices indicating insufficient separation

Reason:

- Linear decision boundary

- No kernel expansion

- No aggressive hyperparameter tuning

For datasets that require non-linear decision surfaces, linear SVMs predictably underperform.

This behavior is expected.

Architectural Decisions

Core design choices:

- Use primal optimization (PEGASOS)

- Avoid kernel complexity at this stage

- Emphasize hardware-level control

- Optimize CPU usage consciously

- Maintain explicit control over memory and threading

The implementation is not a transcription of any single source. It is the result of iterative derivation, experimentation, and refinement.

Learning With AI vs Copying From AI

AI systems were used to accelerate all of the mathematical derivations and implementation work.

The distinction between learning and copying lies in:

- Ability to re-derive the objective function independently

- Ability to reimplement from scratch without reference

- Understanding convergence behavior

- Understanding regularization effects

- Understanding memory and threading trade-offs

If the entire library can be rewritten from first principles with full conceptual clarity, ownership is real.

AI served as:

- A compression mechanism for textbook latency

- A refinement engine

- An error detector

- A boundary expander

The architectural direction and final decisions remained deliberate.

Systems-Level Insights

Building PEGASOS from scratch forces attention to:

- Memory bandwidth limits

- Threading effects

- Numerical stability in subgradient descent

- Learning rate decay scheduling

The exercise keeps algorithmic theory in focus while also emphasizing hardware-aware optimization.
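The link between learning rate decay and numerical stability can be seen in the standard single-sample PEGASOS step for the hinge loss, written here in the usual textbook form. Glacier's exact schedule is not stated in this post, so treat this as the reference formulation rather than a description of the implementation; \(i_t\) denotes the sample drawn at step \(t\).

\[\eta_t = \frac{1}{\lambda t}, \qquad w_{t+1} = \left(1 - \eta_t \lambda\right) w_t + \eta_t\, \mathbb{1}\!\left[y_{i_t}\, w_t^{T} x_{i_t} < 1\right] y_{i_t}\, x_{i_t}\]

Since \(1 - \eta_t \lambda = 1 - \tfrac{1}{t}\), the first step discards \(w_1\) entirely and sets \(w_2 = \tfrac{1}{\lambda}\, y_{i_1} x_{i_1}\) whenever the margin is violated. For small \(\lambda\) that iterate can be very large, which is one reason feature scaling matters. The original PEGASOS formulation also allows an optional projection that bounds the norm:

\[w_{t+1} \leftarrow \min\left(1,\; \frac{1/\sqrt{\lambda}}{\|w_{t+1}\|}\right) w_{t+1}\]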

Next Logical Increment

The next structural jump is CUDA acceleration.

Potential benefits:

- Large-scale mini-batch updates

- Faster convergence on high-dimensional data

- Experimental work in GPU-accelerated optimization

This is deferred until completion of defined minor project objectives.

Conclusion

Glacier’s SVM implementations:

- Use PEGASOS primal optimization

- Are fully implemented in C++

- Leverage Eigen, OpenMP, and OpenBLAS

- Demonstrate competitive speed

- Underfit predictably on non-linear datasets

- Reflect system-aware engineering decisions

The value of Glacier lies not in outperforming scikit-learn universally, but in building vertical control:

From math

To memory

To threads

To execution

That control compounds.

Co-written using AI